Introduction

Open-source development ecosystems rely heavily on package managers such as Node Package Manager (NPM), RubyGems, and pip. These tools give developers easy access to a vast library of reusable software packages, accelerating development timelines and reducing costs. However, the convenience of public repositories comes with significant security risks. Because anyone can publish to these repositories, often anonymously, packages may contain vulnerabilities or malicious code, or introduce indirect threats through their own dependencies, putting the projects that rely on them at risk. This post explores the most common security risks developers face when using packages from public repositories and how to identify them. It also examines developers’ ethical responsibilities when using package managers and discusses how developers can help mitigate these issues.

Security Risks in Public Package Managers

One of the most prominent risks associated with public repositories is the presence of malicious or vulnerable packages. In the NPM ecosystem, for example, many known vulnerabilities reach projects through transitive dependencies: dependencies of dependencies that are installed automatically when a developer adds a package. Transitive dependencies significantly increase the attack surface, because a vulnerability in any one of them can cascade to every project that depends on it, directly or indirectly (Decan et al., 2018; Kabir et al., 2022; Latendresse et al., 2022).
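To make the cascade concrete, here is a minimal sketch that walks a small dependency graph. The graph, the package names, and the helper functions `transitive_closure` and `affected_by` are all hypothetical and exist only for illustration; a real project would derive the graph from its lockfile.

```python
from collections import deque

# Hypothetical dependency graph: each package maps to the packages it depends on.
DEPENDENCY_GRAPH = {
    "my-app": ["web-framework", "logger"],
    "web-framework": ["http-parser", "string-utils"],
    "logger": ["string-utils"],
    "http-parser": [],
    "string-utils": ["tiny-pad"],
    "tiny-pad": [],
}


def transitive_closure(graph, root):
    """Return every package reachable from `root`, excluding `root` itself."""
    seen = set()
    queue = deque(graph.get(root, []))
    while queue:
        package = queue.popleft()
        if package in seen:
            continue
        seen.add(package)
        queue.extend(graph.get(package, []))
    seen.discard(root)  # only matters if the graph contains a cycle back to root
    return seen


def affected_by(graph, vulnerable_package):
    """Return the packages that depend on `vulnerable_package`, directly or not."""
    return {
        package
        for package in graph
        if vulnerable_package in transitive_closure(graph, package)
    }


direct = set(DEPENDENCY_GRAPH["my-app"])
everything = transitive_closure(DEPENDENCY_GRAPH, "my-app")
print("direct:", sorted(direct))
print("transitive only:", sorted(everything - direct))
print("exposed to tiny-pad:", sorted(affected_by(DEPENDENCY_GRAPH, "tiny-pad")))
```

In this toy graph the application declares two dependencies but installs five packages, and a flaw in the leaf package `tiny-pad` reaches every package above it.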

Several incidents have highlighted the dangers of these vulnerabilities. In November 2018, the event-stream incident involved a popular utility library for working with data streams in Node.js that unknowingly incorporated a malicious dependency, leading to over two million downloads of malware (Zerouali et al., 2022). Similarly, the removal of left-pad, a small but widely used NPM package, caused widespread disruption, impacting thousands of projects (Zimmermann et al., 2019). These examples demonstrate how the interconnected nature of software dependencies in public repositories can lead to widespread security issues.

Identifying Security Risks in Dependencies

Developers need to assess security risks at two levels: direct and transitive dependencies. Direct dependencies are those explicitly declared in the package manifest (e.g., package.json for NPM), whereas transitive dependencies are pulled in automatically by the packages a developer explicitly installs (Decan et al., 2018; Zerouali et al., 2022).
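The distinction can be sketched in a few lines. The example below assumes a lockfile in the version 2 or 3 layout, where installed packages appear under a `packages` object keyed by their `node_modules/...` path; the sample manifest, the sample lockfile, and the function name `split_dependencies` are hypothetical. It compares names only, so a nested copy of a package that is also declared directly is not reported separately.

```python
import json

# Hypothetical, heavily trimmed package.json and package-lock.json contents.
MANIFEST_JSON = """
{
  "dependencies": {"express": "^4.18.0"},
  "devDependencies": {"jest": "^29.0.0"}
}
"""

LOCKFILE_JSON = """
{
  "lockfileVersion": 3,
  "packages": {
    "": {"name": "my-app"},
    "node_modules/express": {"version": "4.18.2"},
    "node_modules/accepts": {"version": "1.3.8"},
    "node_modules/accepts/node_modules/mime-types": {"version": "2.1.35"},
    "node_modules/jest": {"version": "29.7.0", "dev": true}
  }
}
"""


def split_dependencies(manifest, lockfile):
    """Return (direct, transitive) sets of package names."""
    direct = set(manifest.get("dependencies", {}))
    direct |= set(manifest.get("devDependencies", {}))

    installed = set()
    for path in lockfile.get("packages", {}):
        if "node_modules/" not in path:
            continue  # skip the root entry "" and any workspace folders
        # The package name is whatever follows the last "node_modules/".
        installed.add(path.rsplit("node_modules/", 1)[1])

    return direct, installed - direct


direct, transitive = split_dependencies(
    json.loads(MANIFEST_JSON), json.loads(LOCKFILE_JSON)
)
print("direct:", sorted(direct))
print("transitive:", sorted(transitive))
```

For a real project, the two JSON strings would be replaced by the parsed contents of the project's own package.json and package-lock.json.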

Transitive dependencies are one of the most critical sources of risk. As projects scale up, the number of indirect dependencies grows, making it difficult to track and assess vulnerabilities. Research shows that roughly 40% of NPM packages rely on code with known vulnerabilities, many of which stem from transitive dependencies (Zimmermann et al., 2019).

Developers can use tools such as npm audit, which checks a project against NPM’s database of known vulnerabilities, or Snyk, which provides real-time monitoring. These tools analyze the entire dependency tree, including transitive dependencies, and alert developers to packages with security flaws (Kabir et al., 2022). A recurring challenge, however, is false positives, particularly for vulnerabilities in development dependencies. For example, npm audit may flag packages that are used only in the development environment and never included in the production build. Such vulnerabilities are technically present in the project, but they do not threaten the production application because the flagged code is never deployed (Latendresse et al., 2022).
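One way to triage such reports is to separate findings that touch production code from those confined to development tooling. The sketch below is an illustration under stated assumptions: the `findings` list is a simplified, hypothetical stand-in for an audit report (not the actual npm audit output format), and it relies on lockfile entries for development-only packages carrying a `"dev": true` flag, as in lockfile versions 2 and 3. The function names are hypothetical.

```python
def dev_only_packages(lockfile):
    """Return names of packages whose every installed copy is flagged as dev."""
    is_dev_only = {}
    for path, meta in lockfile.get("packages", {}).items():
        if "node_modules/" not in path:
            continue  # skip the root entry "" and any workspace folders
        name = path.rsplit("node_modules/", 1)[1]
        flagged = bool(meta.get("dev", False))
        # One production copy is enough to make the package production-relevant.
        is_dev_only[name] = is_dev_only.get(name, True) and flagged
    return {name for name, dev in is_dev_only.items() if dev}


def triage(findings, lockfile):
    """Split findings into (production, development_only) lists."""
    dev_only = dev_only_packages(lockfile)
    production = [f for f in findings if f["package"] not in dev_only]
    development = [f for f in findings if f["package"] in dev_only]
    return production, development


# Hypothetical lockfile excerpt and simplified audit findings.
lockfile = {
    "packages": {
        "": {"name": "my-app"},
        "node_modules/express": {"version": "4.18.2"},
        "node_modules/qs": {"version": "6.5.2"},
        "node_modules/jest": {"version": "29.7.0", "dev": True},
        "node_modules/minimist": {"version": "1.2.0", "dev": True},
    }
}
findings = [
    {"package": "qs", "severity": "high"},
    {"package": "minimist", "severity": "critical"},
]

production, development = triage(findings, lockfile)
print("fix first (production):", production)
print("review later (development only):", development)
```

Packages missing from the lockfile are treated as production findings, which errs on the side of caution. Development-only findings still deserve review, since build tooling runs on developer machines and CI servers. Recent npm versions can also skip development dependencies directly with `npm audit --omit=dev`.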

To mitigate these risks, developers should:

- Regularly audit their dependencies with tools like npm audit and manually ensure required fixes are applied promptly (Kabir et al., 2022).

- Lock down dependency versions with a lockfile such as package-lock.json to avoid inadvertently updating to a vulnerable version (Zimmermann et al., 2019).

- Remove unused or redundant dependencies. Kabir et al. (2022) found that 90% of projects sampled (n=841) had unused dependencies, and 83% had duplicated dependencies, unnecessarily increasing the attack surface.

- Incorporate Software Composition Analysis (SCA) tools such as Snyk into the development workflow to detect vulnerabilities deep within the dependency tree (Latendresse et al., 2022).

- Apply “tree shaking” techniques so that unused code, including code from unused transitive dependencies, is left out of production builds (Latendresse et al., 2022).
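As an illustration of the third recommendation, the following sketch flags declared dependencies that no source file appears to import. It is a rough heuristic under stated assumptions: the regular expression only recognizes simple `require(...)`, `import ... from ...`, and `import(...)` forms with a literal string, and the sample manifest and source files are hypothetical. A package used only from configuration files or npm scripts would be reported as unused, so results need manual review.

```python
import re

# Matches the module specifier in require("x"), import("x"), from "x", import "x".
IMPORT_PATTERN = re.compile(
    r"""(?:require\s*\(\s*|import\s*\(\s*|from\s+|import\s+)['"]([^'"]+)['"]"""
)


def package_name(specifier):
    """Map a module specifier to its package name, or None for local paths."""
    if specifier.startswith((".", "/")):
        return None  # relative or absolute path, not a package
    parts = specifier.split("/")
    if specifier.startswith("@") and len(parts) >= 2:
        return "/".join(parts[:2])  # scoped package such as @scope/pkg
    return parts[0]


def unused_dependencies(declared, sources):
    """Return declared package names never imported in any source text."""
    used = set()
    for text in sources.values():
        for specifier in IMPORT_PATTERN.findall(text):
            name = package_name(specifier)
            if name is not None:
                used.add(name)
    return set(declared) - used


# Hypothetical manifest entries and source files.
declared = {"express": "^4.18.0", "lodash": "^4.17.21",
            "left-pad": "^1.3.0", "@scope/pkg": "^2.0.0"}
sources = {
    "server.js": 'const express = require("express");\nimport "./styles.css";',
    "util.js": "import debounce from 'lodash/debounce';\n"
               "import { helper } from '@scope/pkg/sub';",
}

print("possibly unused:", sorted(unused_dependencies(declared, sources)))
```

Anything the sketch reports is a candidate for removal, not a verdict; confirming that the application still builds and passes its tests after removal remains necessary.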

Ethical Responsibilities of Developers and Educators

Developers have an ethical responsibility to safeguard the software they create and the users who depend on it. When using packages from public repositories, developers must ensure they are not exposing users to security risks. This responsibility ties into ISTE standard 4.7d, which emphasizes empowering individuals to make informed decisions to protect personal data and curate a secure digital profile. Developers must prioritize securing their software, especially when it manages sensitive user data.

One crucial aspect of this responsibility is ensuring the safety of third-party packages and educating others on best practices. For computer science educators, this involves teaching students how to assess package security and encouraging them to use secure alternatives. Educators should also model responsible practices, such as regularly updating dependencies and employing security audits in their projects.

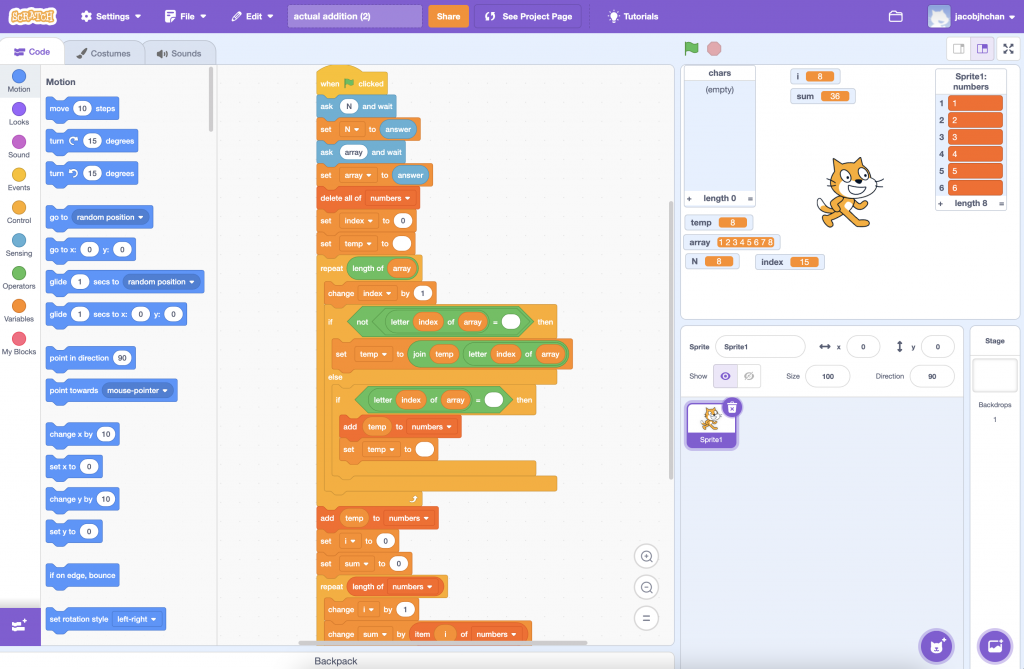

From an educational standpoint, teaching the security risks of public package managers can be mapped onto the SAMR model of educational technology integration. At the Substitution level, students might learn how to install dependencies using package managers. At the Augmentation level, they could use tools like npm audit or Snyk to discover package vulnerabilities. At the Modification level, students would modify code to replace insecure dependencies, and at the Redefinition level, they would design more secure workflows for integrating third-party libraries into their applications.

References

Decan, A., Mens, T., & Constantinou, E. (2018). On the impact of security vulnerabilities in the npm package dependency network. Proceedings of the 15th International Conference on Mining Software Repositories. https://doi.org/10.1145/3196398.3196401

Kabir, M. M. A., Wang, Y., Yao, D., & Meng, N. (2022). How do developers follow security-relevant best practices when using NPM packages? 2022 IEEE Secure Development Conference (SecDev). https://doi.org/10.1109/secdev53368.2022.00027

Latendresse, J., Mujahid, S., Costa, D. E., & Shihab, E. (2022). Not all dependencies are equal: An empirical study on production dependencies in NPM. Proceedings of the 37th IEEE/ACM International Conference on Automated Software Engineering. https://doi.org/10.1145/3551349.3556896

Zerouali, A., Mens, T., Decan, A., & De Roover, C. (2022). On the impact of security vulnerabilities in the npm and RubyGems dependency networks. Empirical Software Engineering, 27(5). https://doi.org/10.1007/s10664-022-10154-1

Zimmermann, M., Staicu, C.-A., Tenny, C., & Pradel, M. (2019). Small world with high risks: A study of security threats in the npm ecosystem. Proceedings of the 28th USENIX Security Symposium. https://www.usenix.org/conference/usenixsecurity19/presentation/zimmerman